Scala functional programming features and more!

Overview

In this tutorial, we will highlight the main features that you will benefit from when adopting the Scala language.

In the previous tutorial, we saw that Scala is both a Functional and Object Oriented language. It should therefore not be surprising that by adopting Scala you will benefit from functional programming constructs as well as features of object oriented programming.

If you are coming from a pure Object Oriented background, hearing a lot of buzzwords around functional programming may at first be a bit scary. However, when learning Scala, it is important that you take the time to embrace the functional aspects of the language so that you can benefit from both Object Oriented and functional programming.

Without any further delay, let us go through the main features of Scala below. I hope you are as excited as I am :)

Steps

1. Functional programming

So what is a function? I'm pretty sure you are familiar with Albert Einstein's famous E = MC² equation. In other words, we could define a function to calculate the energy E by taking the mass M as input and multiplying it by the constant C², where C represents the speed of light. Wow, that was a lot of physics in just one line :)

What's important here is that we've just defined a function that has no side effects. Side effect is a common buzzword in functional programming. A function has a side effect when, besides computing its result, it changes or depends on something outside of itself. In brief, a function should do only what it is meant to do and should not have any hidden internal behaviour.

If our energy function for example calculated the energy for a given mass and then went off to buy a Physics for Dummies book :) from Amazon, the Amazon purchase logic is really a hidden behaviour which should be encapsulated into another function.
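
To make this a bit more concrete, here is a minimal sketch of what such an energy function could look like in Scala. The names EnergyExample, SpeedOfLight and energy are assumptions made for illustration and are not taken from the tutorial's source code.

```scala
object EnergyExample {
  // Speed of light in metres per second (the C in E = MC²).
  val SpeedOfLight: Double = 299792458.0

  // No side effects: the result depends only on the mass (in kg) and a constant,
  // and nothing outside the function is changed.
  def energy(mass: Double): Double = mass * SpeedOfLight * SpeedOfLight

  def main(args: Array[String]): Unit = {
    // Printing is a side effect, so it is kept out of the energy function itself.
    println(energy(1.0))
  }
}
```

Calling energy with the same mass will always give back the same value, which is exactly what makes it easy to reason about.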

2. Mutations … it's a bad thing!

If you have worked on a large enterprise codebase with thousands of lines of code, I'm pretty sure that you've had more than just a few cups of coffee :) to get you through solving some particular bug in the system.

Let's flip the coin over: what if you were solving the same bug, but your thousands of lines of code had no mutations to your variables? As we will see in upcoming tutorials from Chapter 2, Scala strongly encourages immutability as a first class citizen in the language.

Say you had defined a variable called cupOfCoffeeEnergy which was initialized from the energy function in Step 1. Then, regardless of where you are in your thousands of lines of code, you know that the value of your cupOfCoffeeEnergy will be exactly the same!

Therefore, if your bug was a wrong value being represented for the variable cupOfCoffeeEnergy, then the solution would be to simply step through the energy function from Step 1 regardless of your thousands of lines of code!

When we combine Step 1 and Step 2 such that we create functions with no mutations and no side effects, we end up with Pure Functions!
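
As a rough sketch of the idea, the snippet below defines cupOfCoffeeEnergy as an immutable val. The object name ImmutabilityExample, the 0.25 kg mass and the masses list are assumptions chosen purely for illustration.

```scala
object ImmutabilityExample {
  // Same idea as the energy function from Step 1.
  def energy(mass: Double): Double = mass * 299792458.0 * 299792458.0

  // A val can be assigned only once, so this value never changes afterwards.
  val cupOfCoffeeEnergy: Double = energy(0.25)
  // cupOfCoffeeEnergy = 0.0   // would not compile: reassignment to val

  // Immutable collections follow the same rule: map returns a new list
  // and leaves the original list untouched.
  val masses: List[Double] = List(1.0, 2.0)
  val doubledMasses: List[Double] = masses.map(_ * 2)
}
```

Because nothing can reassign cupOfCoffeeEnergy, the only place a wrong value could come from is the energy function itself.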

3. Composing functions

As we've already mentioned in Steps 1 and 2, we should strive to define functions in a strict mathematical sense. In doing so, we end up with a bunch of functions that can be freely combined with other functions to compose even more functions.

The best analogy here is to quote Martin Odersky: "... think lego bricks!" For more details see the link in the Tip section below.
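
Here is a small sketch of the lego brick idea using the andThen and compose methods that Scala provides on single argument functions. The function names addOne and twice are made up for this example.

```scala
object ComposeExample {
  // Two small building blocks.
  val addOne: Int => Int = x => x + 1
  val twice: Int => Int = x => x * 2

  // andThen runs addOne first and feeds its result to twice.
  val addOneThenTwice: Int => Int = addOne.andThen(twice)

  // compose goes the other way round: twice runs first, then addOne.
  val twiceThenAddOne: Int => Int = addOne.compose(twice)

  def main(args: Array[String]): Unit = {
    println(addOneThenTwice(3)) // (3 + 1) * 2 = 8
    println(twiceThenAddOne(3)) // (3 * 2) + 1 = 7
  }
}
```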

4. Higher Order functions

In addition to the lego-style mixing of functions described in Step 3 above, functions in Scala are by design first class citizens. As such, in Scala you can create higher order functions, which are functions that take other functions as parameters or return functions as results.
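
The sketch below shows both flavours of a higher order function. The names applyTwice and multiplyBy are hypothetical and only serve to illustrate the idea.

```scala
object HigherOrderExample {
  // Takes a function f as a parameter and applies it twice to x.
  def applyTwice(f: Int => Int, x: Int): Int = f(f(x))

  // Returns a new function that multiplies its input by the given factor.
  def multiplyBy(factor: Int): Int => Int = x => x * factor

  def main(args: Array[String]): Unit = {
    println(applyTwice(x => x + 3, 10))        // 16
    println(List(1, 2, 3).map(multiplyBy(2)))  // List(2, 4, 6)
  }
}
```

Note that map on List is itself a higher order function from the standard library, since it takes the function to apply as its parameter.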

5. Pattern Matching

No functional programming language would be complete without pattern matching :) Sure, you could achieve the same behaviour without using a functional language. However, let us consider what happens in a Big Data processing pipeline:

- You load data in segments or partitions

- You filter for some entity

- You do some aggregation or computation

- You filter some more

- You output or save the result to some database.

NOTE:

- We've just shown how Apache Spark works!

- With pattern matching being a core feature of Scala, you can pattern match pretty much anywhere in the above pipeline!
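
To give a feel for it, here is a minimal sketch of pattern matching inside a tiny filter and aggregate pipeline. It uses a plain Scala List as a stand-in for a real data source and does not use Apache Spark. The Event types and the netRevenue function are assumptions invented for this example.

```scala
object PatternMatchExample {
  // A small, made-up set of events flowing through the pipeline.
  sealed trait Event
  final case class Purchase(item: String, amount: Double) extends Event
  final case class Refund(item: String, amount: Double) extends Event
  case object Heartbeat extends Event

  // collect filters and transforms in one go: only the events that match
  // a case are kept, and each match extracts the amount we care about.
  def netRevenue(events: List[Event]): Double =
    events.collect {
      case Purchase(_, amount) => amount
      case Refund(_, amount)   => -amount
    }.sum

  def main(args: Array[String]): Unit = {
    val events = List(Purchase("book", 20.0), Heartbeat, Refund("book", 5.0))
    println(netRevenue(events)) // 15.0
  }
}
```

The Heartbeat event matches neither case, so it is silently filtered out before the aggregation step.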

6. Asynchronous and parallel programming

Asynchronous operations are greatly simplified with the use of futures. In addition, you can just as easily compose and sequence futures, similar to composing functions.
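
As a rough sketch, the snippet below sequences two futures with a for comprehension using the standard scala.concurrent API. The fetchPrice and fetchQuantity functions and their hard coded values are hypothetical stand-ins for real asynchronous calls.

```scala
import scala.concurrent.{Await, Future}
import scala.concurrent.ExecutionContext.Implicits.global
import scala.concurrent.duration._

object FutureExample {
  // Hypothetical asynchronous lookups; real code might call a database or a web service.
  def fetchPrice(item: String): Future[Double] = Future { 2.5 }
  def fetchQuantity(item: String): Future[Int] = Future { 4 }

  // Sequence the two futures: the quantity is fetched once the price is available,
  // and the final result is itself a future.
  def orderTotal(item: String): Future[Double] =
    for {
      price    <- fetchPrice(item)
      quantity <- fetchQuantity(item)
    } yield price * quantity

  def main(args: Array[String]): Unit = {
    // Blocking with Await is used here only to print the result in a small demo.
    println(Await.result(orderTotal("coffee"), 5.seconds)) // 10.0
  }
}
```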

If instead you need grid computing capabilities, then using Akka actors would be just what the Scala doctor recommends :)

7. Dependency Injection

Because Scala is an Object Oriented programming language, you have the usual type hierarchies you'd expect. However, Scala brings new meaning to dependency injection as a first class citizen using features such as traits and implicits.
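
One possible way to wire a dependency with a trait and an implicit parameter is sketched below. This is only one of several styles you may come across, and the names Notifier, EmailNotifier and OrderService are assumptions made up for the example.

```scala
object DependencyInjectionExample {
  // The dependency is described by a trait.
  trait Notifier {
    def send(message: String): String
  }

  // One possible implementation; a test could supply a different one.
  class EmailNotifier extends Notifier {
    def send(message: String): String = s"email: $message"
  }

  // The service asks for its dependency through an implicit parameter.
  class OrderService()(implicit notifier: Notifier) {
    def placeOrder(item: String): String = notifier.send(s"ordered $item")
  }

  def main(args: Array[String]): Unit = {
    // Whichever Notifier is in implicit scope gets injected by the compiler.
    implicit val notifier: Notifier = new EmailNotifier
    println(new OrderService().placeOrder("coffee")) // email: ordered coffee
  }
}
```

Since OrderService only knows about the Notifier trait, swapping in another implementation does not require changing the service.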

8. Extensible language

The language comes with built-in features such as implicits, operator overloading, macros, etc. which allow you to create your own Domain Specific Languages, or DSLs for short. This is a great introduction to Step 9 below, which presents a snapshot of some of the major tools, frameworks, DSLs, etc. which are living proof of how the Scala language was designed from the ground up to be extensible.
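
Here is a tiny sketch of how operator overloading and implicits can be combined into a DSL-like syntax. The Money type, the MoneySyntax class and the usd method are hypothetical names invented for this illustration.

```scala
object DslExample {
  final case class Money(amount: Int, currency: String) {
    // Operator overloading: + is just an ordinary method name in Scala.
    def +(other: Money): Money = {
      require(currency == other.currency, "currencies must match")
      Money(amount + other.amount, currency)
    }
  }

  // An implicit class adds a usd method to plain Int values.
  implicit class MoneySyntax(val amount: Int) {
    def usd: Money = Money(amount, "USD")
  }

  // Reads almost like plain English.
  val total: Money = 5.usd + 10.usd

  def main(args: Array[String]): Unit = {
    println(total) // Money(15,USD)
  }
}
```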

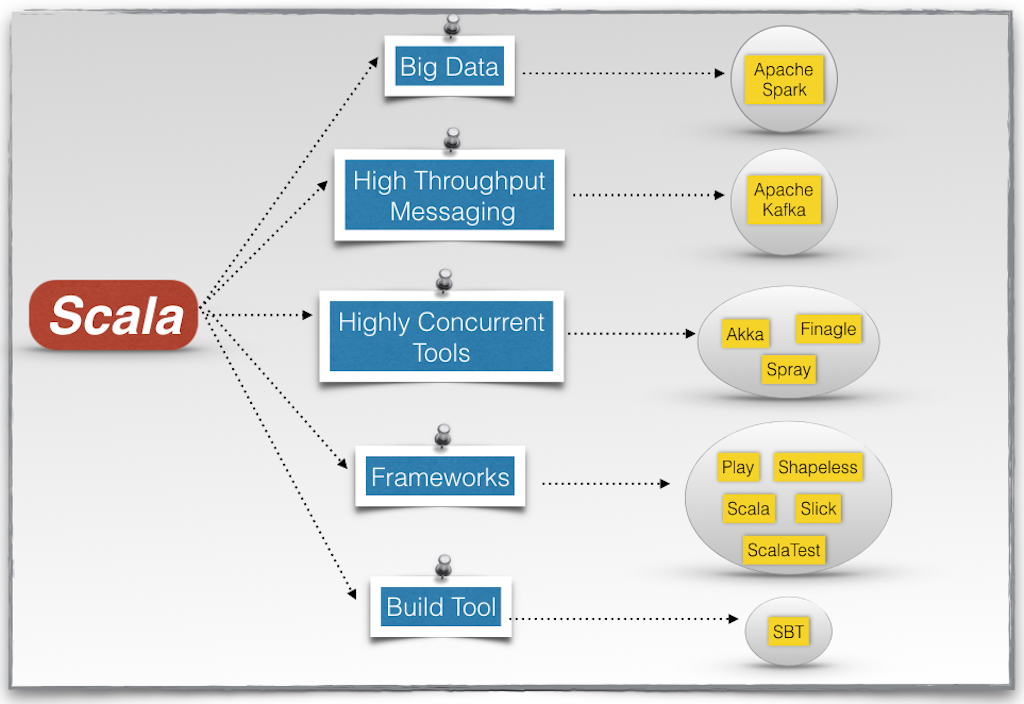

9. The Scala ecosystem

The Scala ecosystem has matured considerably and rather quickly over the past years. The picture below shows a snapshot of the most popular tools and frameworks written in Scala. It certainly reinforces Step 8 above with regards to the extensibility of the language.

NOTE:

- Big Data:

- Apache Spark is a leading open source platform for large scale data processing.

- High Throughput Messaging:

- Kafka is a high throughput distributed messaging system.

- Highly Concurrent Systems:

- Frameworks:

- Play from Lightbend (formerly known as Typesafe) allows you to easily build scalable web applications.

- Shapeless brings a lot of added functionality when it comes to dealing with types.

- ScalaTest allows you to easily test your Scala applications.

- Scalaz provides additional semantics for functional programming.

- Slick is a rich data access layer.

- Build Tool:

- SBT is a popular build tool when developing Scala applications.

10. Mixing Java code with Scala

As we will see in upcoming tutorials, when making the switch to Scala, you do not have to give up on your existing Java libraries.
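
As a quick taste, the sketch below calls two classes from the Java standard library directly from Scala. It assumes Scala 2.13 or later, because that is where scala.jdk.CollectionConverters is available. The object and method names are made up for the example.

```scala
import java.time.LocalDate
import java.util.{ArrayList => JArrayList}
import scala.jdk.CollectionConverters._

object JavaInteropExample {
  // Build a java.util.ArrayList and convert it into an immutable Scala List.
  def languages: List[String] = {
    val javaList = new JArrayList[String]()
    javaList.add("Scala")
    javaList.add("Java")
    javaList.asScala.toList
  }

  // Java classes such as java.time.LocalDate can be used as is.
  def endOfJanuary: LocalDate = LocalDate.of(2020, 1, 1).plusDays(30)

  def main(args: Array[String]): Unit = {
    println(languages)    // List(Scala, Java)
    println(endOfJanuary) // 2020-01-31
  }
}
```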

Did we forget to mention that Scala provides type-safety? Indeed, it does!

This concludes our tutorial on Scala functional programming features and more. I hope you've found it useful!

Summary

In this article, we went over the following:

- Functional programming

- Mutations

- Composing functions

- Higher order functions

- Pattern matching

- Asynchronous and parallel programming

- Dependency Injection

- Extensible language

- The Scala ecosystem

- Mixing Java and Scala

Tip

- This tutorial was inspired by the presentation from Martin Odersky. The talk is a bit long but I highly recommend you watch at least the first 30 minutes of it as Martin Odersky does an awesome job at presenting the Scala language.

- For additional information on Scala, you can refer to the official site.

- If you are new to Scala or programming in general, the above Scala ecosystem snapshot can feel overwhelming. And you are absolutely right!

I have been fortunate enough to work with most if not all of the above mentioned tools. Sure, you can learn some basic Scala and jump right into using these tools.

But the more comfortable you are with the inner workings of Scala, the more easily you will be able to master any of the above tools! So I hope you are as excited about the upcoming tutorials as I am :)

Source Code

The source code is available on the allaboutscala GitHub repository.

What's Next

If you have gone through the previous tutorial as well as this one, you could now proceed to Chapter 1 which will be dedicated to getting familiar with the IntelliJ IDE for developing Scala applications.

Stay tuned!